Understanding and analyzing IP data has become paramount for businesses, cybersecurity experts, and marketers alike.

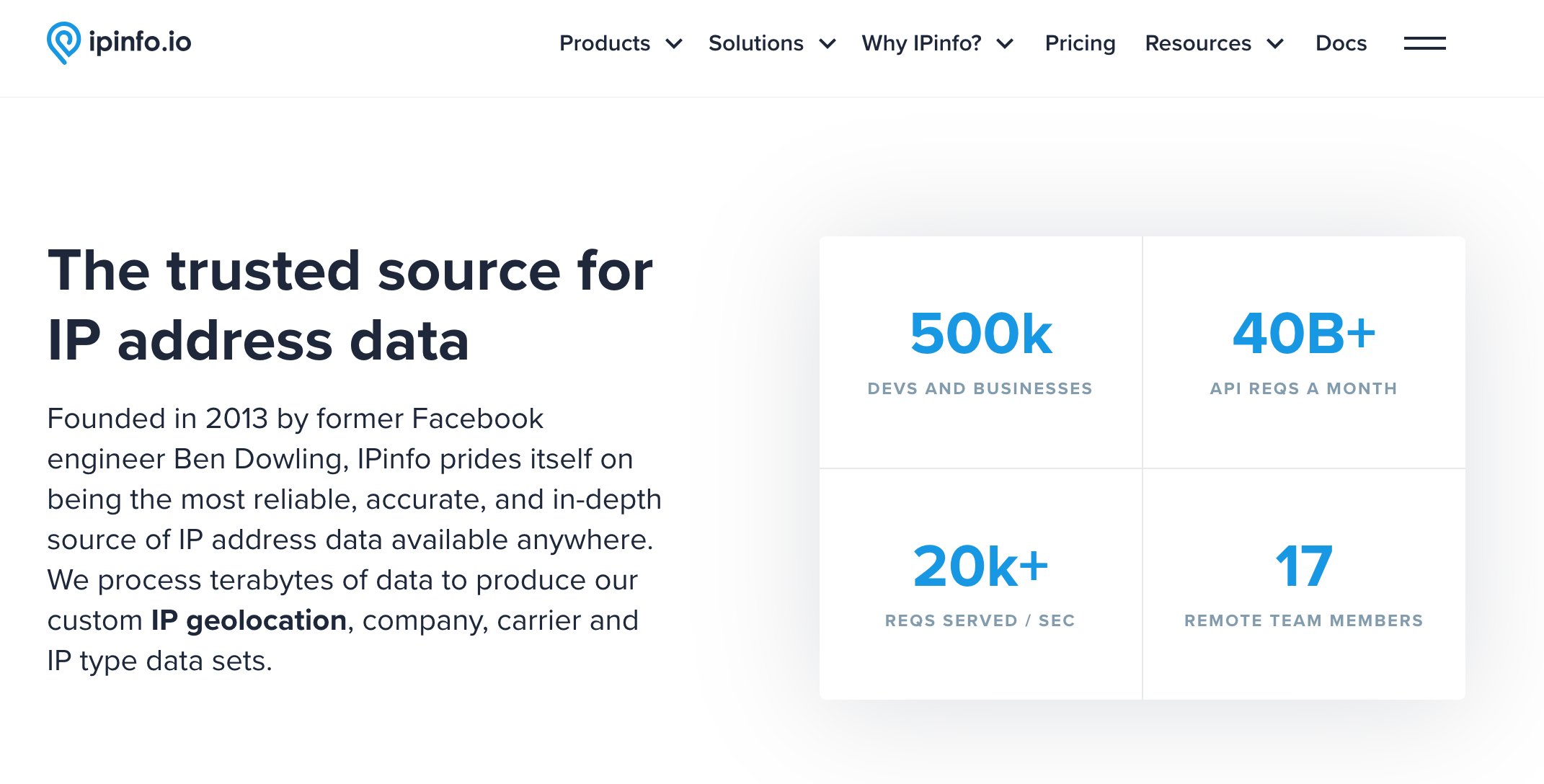

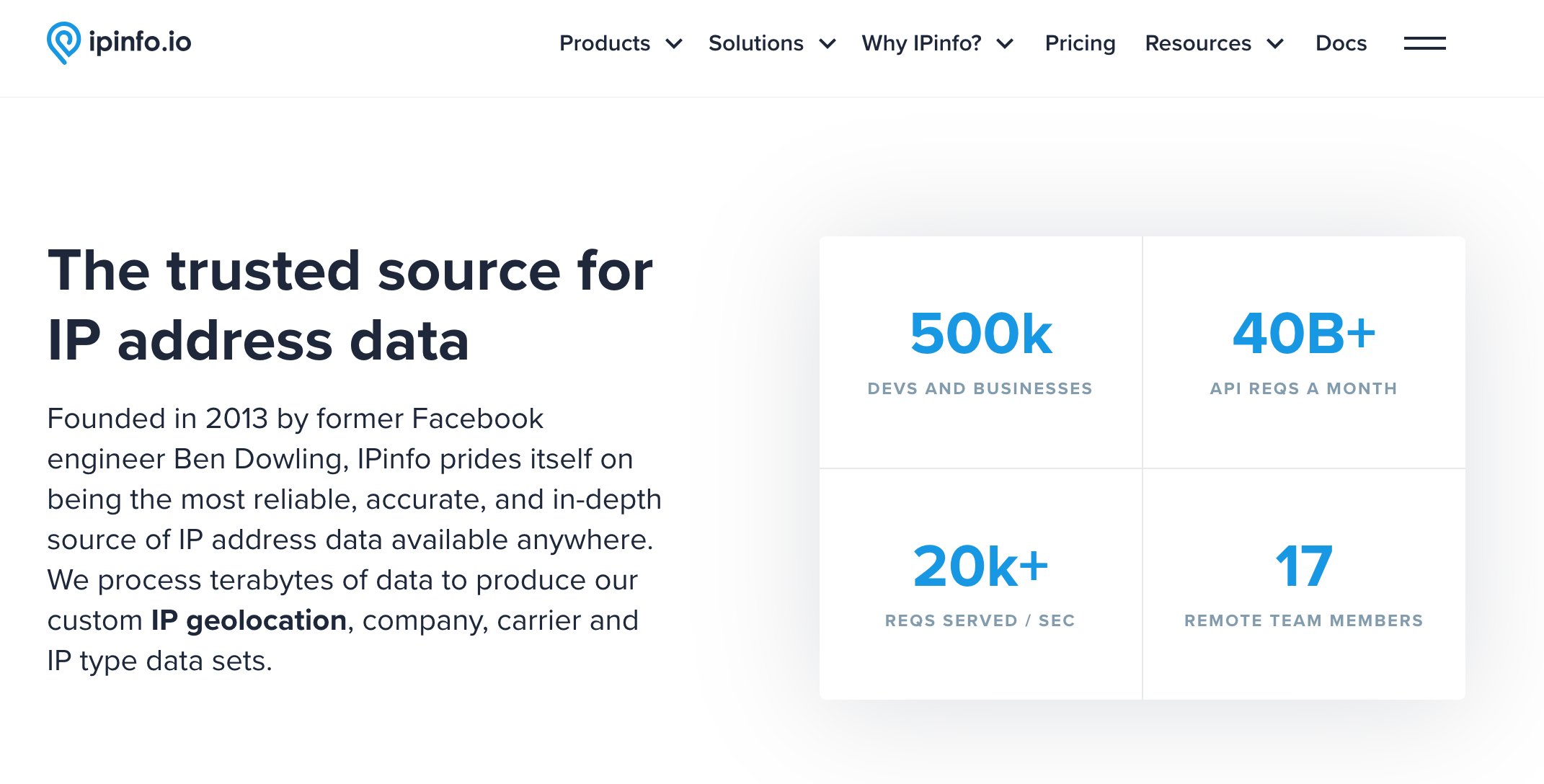

At the forefront of this revolution is IPinfo.io, a comprehensive platform that has established itself as a trusted source for IP address data.

With a vast array of features ranging from geolocation services to privacy detection, IPinfo.io provides insights that drive decision-making processes in various industries.

Understanding IPinfo.io and Its Significance

Every device, every user, and every interaction leaves a digital footprint.

Central to this footprint is the IP address, a unique identifier that provides insights into user behavior, location, and more.

IPinfo.io is a platform designed to decode the stories these IP addresses tell.

What is IPinfo.io?

IPinfo.io is more than just a platform; it's a comprehensive tool that offers in-depth information about IP addresses.

Founded in 2013, IPinfo.io has grown exponentially, serving millions of users and businesses worldwide.

Its primary mission is to transform raw IP data into actionable insights, aiding industries ranging from cybersecurity to marketing in making informed decisions.

IPinfo.io Features and Offerings

- Geolocation. With just an IP address, IPinfo.io can estimate a user's geographical location and return details such as postal code, city, region, country, and latitude and longitude coordinates. Such granularity is invaluable for businesses looking to tailor their services based on user location or for security professionals monitoring potential threats.

- ASN (Autonomous System Numbers). IPinfo.io provides detailed information about the ownership of IP address blocks. By identifying the ASN, users can determine which organization is responsible for a particular set of IP addresses, offering insights into traffic sources and potential partnerships.

- Privacy Detection. IPinfo.io's privacy detection feature can identify if an IP address is associated with VPNs, proxies, or Tor services. Such detection is crucial for businesses aiming to ensure genuine user interactions and for security experts monitoring masked activities.

Beyond these core features, IPinfo.io offers a plethora of other services, such as company information, mobile carrier detection, abuse contact details, and IP whois data.
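To make these fields concrete, here is a minimal Python sketch that flattens one lookup result into typed fields. The sample payload, its field names (`loc`, `org`, `postal`), and the `IPRecord` and `parse_record` names are assumptions for illustration, modeled on the JSON shape IPinfo.io's API is commonly shown returning; check the official documentation before relying on them.

```python
import json
from dataclasses import dataclass
from typing import Optional


@dataclass
class IPRecord:
    ip: str
    city: Optional[str] = None
    region: Optional[str] = None
    country: Optional[str] = None
    postal: Optional[str] = None
    latitude: Optional[float] = None
    longitude: Optional[float] = None
    asn: Optional[str] = None
    org_name: Optional[str] = None


def parse_record(payload: dict) -> IPRecord:
    """Flatten one response dictionary into typed fields."""
    record = IPRecord(
        ip=payload["ip"],
        city=payload.get("city"),
        region=payload.get("region"),
        country=payload.get("country"),
        postal=payload.get("postal"),
    )
    loc = payload.get("loc")
    if loc:
        # Assumption: "loc" is a "latitude,longitude" string.
        lat_text, lon_text = loc.split(",", 1)
        record.latitude, record.longitude = float(lat_text), float(lon_text)
    org = payload.get("org")
    if org:
        # Assumption: "org" looks like "AS15169 Google LLC".
        first, _, rest = org.partition(" ")
        if first[:2].upper() == "AS" and first[2:].isdigit():
            record.asn, record.org_name = first.upper(), rest or None
        else:
            record.org_name = org
    return record


# Illustrative sample only, not live data.
SAMPLE_JSON = """
{
  "ip": "8.8.8.8",
  "city": "Mountain View",
  "region": "California",
  "country": "US",
  "loc": "37.4056,-122.0775",
  "org": "AS15169 Google LLC",
  "postal": "94043"
}
"""

record = parse_record(json.loads(SAMPLE_JSON))
print(record)
```

Splitting `loc` and `org` up front keeps later steps, such as filtering by ASN or plotting coordinates, free of string handling.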

What Makes IPinfo.io A Standout

While there are numerous IP data providers in the market, IPinfo.io distinguishes itself in several ways:


- Accuracy and Reliability. IPinfo.io aggregates information from multiple sources and updates it daily to ensure that users receive the most up-to-date and reliable insights.

- User-Friendly Interface. Whether you're a tech-savvy developer or a business professional, IPinfo.io's intuitive interface ensures that you can access the data you need without a steep learning curve.

- Scalability. IPinfo.io is built to cater to a diverse user base, from individual developers to large enterprises. Its robust infrastructure, backed by Google Cloud, ensures seamless performance even at high request volumes.

- Reputation. Over the years, IPinfo.io has garnered trust from over 400,000 users, developers, and businesses. Its commitment to transparency, data integrity, and user satisfaction has cemented its position as a leader in the IP data industry.

IPinfo.io is not just another IP data provider; it's a comprehensive solution that offers unparalleled insights, making it an invaluable asset for anyone looking for comprehensive IP address data.

IPinfo.io's Significance in the IP Data Industry

The Rising Trend of Scraping IP Data

As the demand for IP data surges, so does the trend of extracting data from such platforms.


Scraping IP data can offer numerous applications. For instance, businesses might scrape IP data to understand their audience demographics better, while cybersecurity professionals might do so to monitor potential threats.

But it's not just about extracting data; it's about doing so efficiently, accurately, and at scale.

Ethical Considerations in Scraping IPinfo.io

Yet, with the benefits come challenges. The act of scraping, especially without permission, can raise ethical concerns.

It's crucial to consider the implications of unauthorized data extraction, both from a moral standpoint and in terms of compliance.

IPinfo.io, like many platforms, has terms of service that outline what is permissible and what isn't when it comes to accessing its data.

Ignoring these terms can lead to legal repercussions and tarnish the reputation of entities involved.

In this context, it becomes vital for anyone looking to scrape IPinfo.io, or any platform for that matter, to tread carefully.

Understanding the platform's significance, the rising trend of IP data scraping, and the ethical boundaries is the first step in this journey.

Why Scrape IPinfo.io?

In the digital landscape, data is the new gold. Every byte of information holds the potential to unlock insights, drive decisions, and catalyze innovation.


Accurate IP address data, in particular, is a treasure trove that reveals nuances about user behavior, location, and preferences.

IPinfo.io, with its vast repository of IP-related information, emerges as a prime candidate for data extraction.

Benefits of Extracting IP Data

- Granular Insights. IP data isn't just about numbers and dots. It's about understanding user demographics, preferences, and behavior. By scraping IPinfo.io, businesses and individuals can access detailed geolocation data, privacy detection metrics, and more, offering a granular view of their audience.

- Enhanced Decision Making. With accurate IP data at their fingertips, businesses can make informed decisions. Whether it's for tailoring marketing campaigns based on user location or enhancing cybersecurity measures, IP data provides invaluable insights.

- Cost-Efficiency. While IPinfo.io offers premium services, scraping can sometimes be a cost-effective way for businesses to access large volumes of data without incurring recurring subscription costs. However, it's crucial to balance cost savings with ethical considerations.

- Automation and Scalability. Automated scraping tools can extract vast amounts of data in a short time, allowing businesses to scale their data acquisition efforts efficiently.


Use Cases

- Cybersecurity. By understanding the origin of traffic, security professionals can detect potential threats like fraudulent traffic, identify malicious IP addresses, and fortify their defense mechanisms to protect internet users.

- Web Personalization. For online businesses, user experience is paramount. By leveraging IP data, e-commerce platforms and content providers can personalize web content based on user location, enhancing engagement and conversion rates.

- Financial Technology. IP data aids in fraud detection in the fintech sector. By analyzing IP addresses, financial institutions can identify suspicious transactions, verify user authenticity, and ensure secure online banking experiences.

- Ad Targeting. IP data offers advertisers a way to target ads more effectively. By understanding user demographics and locations, advertisers can tailor ads to resonate with the customer journey of specific audiences, maximizing ROI.

Real-World Applications and Success Stories

- E-Commerce Localization. Leading e-commerce platforms have leveraged IP data to localize their content, offering region-specific product listings, prices, and offers. Such personalization has led to increased sales and customer satisfaction.

- Threat Detection. Cybersecurity firms have successfully used IP data to detect and neutralize threats from specific IP ranges, safeguarding businesses from malicious activity and potential cyber-attacks.

- Banking Security. Several banks have incorporated IP data into their security protocols. By analyzing login IP addresses, they've been able to flag and prevent unauthorized account access, ensuring customer trust.

- Marketing Campaigns. Brands have harnessed IP data to launch location-specific marketing campaigns, resulting in higher engagement and conversion rates. For instance, a brand might launch a winter wear campaign targeting IP addresses from colder regions.

In essence, scraping IPinfo.io isn't just about data extraction; it's about harnessing the power of IP data to drive innovation, enhance user experiences, and foster business growth. As the digital world continues to evolve, the significance of IP data, and platforms like IPinfo.io, will only amplify.

Tools & Techniques for Scraping


The tools and techniques used to extract data play a pivotal role in determining the efficiency, accuracy, and value of the scraped data. Here are some tools you can use to streamline the IPinfo.io scraping process:

1. IPinfo CLI

The IPinfo Command-Line Interface (CLI) is a tool designed specifically for interacting with IPinfo.io. It's not just a mere interface; it's a gateway to the vast repository of IP data that IPinfo.io houses.

Features:

- Versatility. The IPinfo CLI is versatile, allowing users to fetch details about an IP address, ASN, or even a range of IP addresses directly from the command line.

- Efficiency. With the CLI, users can quickly retrieve data without the need for complex scripts or manual data extraction. It's designed for speed, ensuring that users get the information they need in real-time.

- Advanced Features. Beyond basic IP lookups, the CLI offers advanced features like batch processing, allowing users to query multiple IP addresses simultaneously. Additionally, it provides options to format the output, making data integration and analysis seamless.
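The batch-processing idea can be sketched independently of the CLI. The snippet below deduplicates a list of IP addresses and splits it into fixed-size batches so that each batch can be sent in a single lookup. It is not the CLI's implementation: `batched`, `lookup_all`, and the `lookup_batch` callable are hypothetical names, and the batch size of 100 is an arbitrary assumption.

```python
from typing import Callable, Dict, Iterable, Iterator, List


def batched(ips: Iterable[str], size: int) -> Iterator[List[str]]:
    """Yield deduplicated IPs in lists of at most `size` items."""
    if size < 1:
        raise ValueError("size must be at least 1")
    seen = set()
    batch: List[str] = []
    for ip in ips:
        ip = ip.strip()
        if not ip or ip in seen:
            continue  # skip blanks and repeats so no lookup is wasted
        seen.add(ip)
        batch.append(ip)
        if len(batch) == size:
            yield batch
            batch = []
    if batch:
        yield batch


def lookup_all(
    ips: Iterable[str],
    lookup_batch: Callable[[List[str]], Dict[str, dict]],
    size: int = 100,
) -> Dict[str, dict]:
    """Run `lookup_batch` once per batch and merge the results."""
    results: Dict[str, dict] = {}
    for batch in batched(ips, size):
        results.update(lookup_batch(batch))
    return results


# Stand-in lookup for demonstration; a real one would query a data source.
def fake_lookup(batch: List[str]) -> Dict[str, dict]:
    return {ip: {"ip": ip} for ip in batch}


print(list(batched(["1.1.1.1", "8.8.8.8", "1.1.1.1", "9.9.9.9"], 2)))
print(lookup_all(["1.1.1.1", "8.8.8.8", "9.9.9.9"], fake_lookup, size=2))
```

Deduplicating before batching matters when the input comes from logs, where the same address often appears many times.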

2. grepip for IP Filtering

grepip is a reliable command-line utility tailored for IP address parsing and filtering, making it an invaluable asset for those dealing with vast datasets.

- Precision. grepip allows users to filter IP addresses based on specific criteria, ensuring that only relevant data is extracted.

- Flexibility. Whether you're dealing with IPv4 or IPv6 addresses, grepip handles both with ease. It also supports CIDR notation, allowing users to filter IP addresses within specific ranges.

- Integration. grepip can be seamlessly integrated into data extraction pipelines, working in tandem with other tools to streamline the scraping process.
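For readers who want to see the kind of filtering described here, the following Python sketch extracts IPv4 and IPv6 addresses from free text and keeps only those inside a CIDR range. It uses the standard `ipaddress` module as a stand-in and does not reproduce grepip's own behavior or options; `parse_ip`, `extract_ips`, and `filter_by_cidr` are hypothetical names.

```python
import ipaddress
import re
from typing import List, Optional, Union

IPAddress = Union[ipaddress.IPv4Address, ipaddress.IPv6Address]

# Split on whitespace and common punctuation that surrounds addresses in logs.
SEPARATORS = re.compile(r"[\s,;\[\]()\"'<>]+")


def parse_ip(token: str) -> Optional[IPAddress]:
    """Return the address in `token`, or None if it is not a valid IP."""
    token = token.rstrip(".")  # drop a sentence-ending period
    # Try the token as is, then without a trailing colon ("10.0.0.1:").
    for candidate in (token, token.rstrip(":")):
        try:
            return ipaddress.ip_address(candidate)
        except ValueError:
            continue
    return None


def extract_ips(text: str) -> List[IPAddress]:
    """Collect every valid IPv4 or IPv6 address found in `text`."""
    found = []
    for token in SEPARATORS.split(text):
        address = parse_ip(token)
        if address is not None:
            found.append(address)
    return found


def filter_by_cidr(ips: List[IPAddress], cidr: str) -> List[IPAddress]:
    """Keep only addresses that fall inside the given CIDR range."""
    network = ipaddress.ip_network(cidr, strict=False)
    return [ip for ip in ips if ip.version == network.version and ip in network]


log = "Accepted login from 10.1.2.3, then 192.168.0.7. Also 2001:db8::1 and 999.1.1.1"
addresses = extract_ips(log)
print(addresses)
print(filter_by_cidr(addresses, "10.0.0.0/8"))
```

Validation is delegated to `ipaddress.ip_address`, so malformed tokens such as `999.1.1.1` are dropped rather than matched by a loose pattern. Addresses with an attached port, such as `10.0.0.1:443`, are not handled by this sketch.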

3. Third-Party Integration: Pipedream

While the IPinfo CLI and grepip are powerful in their own right, sometimes the scraping process requires third-party integrations. This is where platforms such as Pipedream come into play.

Pipedream is an integration platform that offers a seamless way to integrate IPinfo.io with other platforms, automate data extraction workflows, and enhance data processing capabilities.

Pipedream's visual workflow builder allows users to design complex data extraction and processing workflows without writing a single line of code.

It supports triggers, actions, and a plethora of pre-built integrations, making it a versatile tool for IP data scraping.

Whether you're scraping data for a small project or a large enterprise, Pipedream scales to meet your needs, ensuring that data extraction remains smooth regardless of the volume.

Ethical Considerations & Best Practices in Scraping IPinfo.io

When it comes to data scraping, the line between what's permissible and what's not can often blur.

While it's tempting to gather as much data as possible, scraping comes with a sense of responsibility and ethics.

Here's a deep dive into the ethical considerations and best practices one should adhere to when scraping IPinfo.io or any other platform.


Respecting Terms of Service and Privacy Policies

- Legal Implications. Every platform, including IPinfo.io, has a set of terms of service (ToS) that users agree to when accessing its services. These terms often include clauses related to data scraping. Ignoring or violating these terms can lead to legal repercussions, tarnishing the reputation of the scraper and potentially leading to hefty fines.

- Moral Responsibility. Beyond legalities, there's a moral obligation to respect the platform's terms. After all, platforms like IPinfo.io invest significant resources in curating and maintaining their data. Unauthorized scraping can be seen as a breach of trust and an infringement on the platform's intellectual property.

- Privacy First. In addition to the ToS, privacy policies outline how user data is handled and protected. Ethical scraping ensures that any data extracted respects the privacy of individuals, avoiding any personal or sensitive information.

Avoiding Bans and Understanding Rate Limits

- Stealth and Moderation. IPinfo.io and similar platforms often have mechanisms to detect and block bot-like activities. To avoid bans, it's advisable to mimic human behavior by introducing delays between requests and rotating IP addresses if necessary. Geonode residential proxies allow users to emulate organic traffic, thereby bypassing detection systems and maintaining uninterrupted scraping operations.

- Rate Limits. Most platforms, including IPinfo.io, impose rate limits on the number of requests a user can make within a specific timeframe. Respecting these limits is not just about avoiding bans; it's about ensuring fair access to the platform's resources for all users.

- Feedback Loops. Some platforms provide feedback on scraping activities, alerting users when they're nearing rate limits. Being responsive to such feedback ensures continuous access and fosters a positive relationship with the platform.
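Delays between requests and respect for rate limits can be enforced in code rather than left to discipline. The sketch below spaces requests at a fixed minimum interval and reads a `Retry-After` header when a server signals that a limit was hit. The `Throttle` class, the `retry_after_seconds` helper, and the limit of 30 requests per minute are assumptions for illustration; `Retry-After` is a standard HTTP header, but whether IPinfo.io sends it is not confirmed here, so use the platform's documented limits.

```python
import time
from typing import Callable, Mapping, Optional


class Throttle:
    """Allow at most `max_per_minute` calls by sleeping between them."""

    def __init__(
        self,
        max_per_minute: int,
        clock: Callable[[], float] = time.monotonic,
        sleep: Callable[[float], None] = time.sleep,
    ) -> None:
        if max_per_minute < 1:
            raise ValueError("max_per_minute must be at least 1")
        self.min_interval = 60.0 / max_per_minute
        self._clock = clock
        self._sleep = sleep
        self._last: Optional[float] = None

    def wait(self) -> float:
        """Block until the next request is allowed; return seconds slept."""
        now = self._clock()
        slept = 0.0
        if self._last is not None:
            remaining = self.min_interval - (now - self._last)
            if remaining > 0:
                self._sleep(remaining)
                slept = remaining
                now = self._clock()
        self._last = now
        return slept


def retry_after_seconds(headers: Mapping[str, str], default: float = 60.0) -> float:
    """Read a numeric Retry-After header, falling back to `default`."""
    try:
        return max(0.0, float(headers.get("Retry-After")))
    except (TypeError, ValueError):
        # Header missing or given as an HTTP date; use the conservative default.
        return default


throttle = Throttle(max_per_minute=30)
print(throttle.min_interval)  # seconds between requests
print(retry_after_seconds({"Retry-After": "12"}))
```

Call `throttle.wait()` immediately before each request. The clock and sleep functions are injectable so the pacing logic can be tested without real waiting.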

The Importance of Not Overloading Servers

- Shared Resources. Every request made to a platform consumes its server resources. Excessive scraping can overload these servers, degrading the platform's performance and potentially causing outages.

- Ethical Considerations. Overloading servers is not just a technical issue; it's an ethical one. By consuming more than one's fair share of resources, aggressive scrapers can deprive other users of the service, leading to a subpar experience for them.

- Best Practices. To avoid overloading servers, it's advisable to spread out scraping activities over time, avoid peak usage hours, and monitor server response times. If a server seems slow or unresponsive, it's a sign to throttle back and reduce the scraping intensity.
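Throttling back when a server slows down can be automated by adjusting the pause between requests based on observed response times. The following is a minimal sketch of that idea: the `AdaptiveDelay` class and its thresholds (a 2-second "slow" cutoff, doubling on trouble, halving on recovery) are illustrative assumptions, not recommended values.

```python
class AdaptiveDelay:
    """Grow the pause between requests when the server looks strained."""

    def __init__(
        self,
        base: float = 1.0,
        maximum: float = 60.0,
        slow_threshold: float = 2.0,
        factor: float = 2.0,
    ) -> None:
        self.base = base
        self.maximum = maximum
        self.slow_threshold = slow_threshold
        self.factor = factor
        self.current = base

    def update(self, response_seconds: float, ok: bool = True) -> float:
        """Record one response and return the delay to use before the next."""
        if not ok or response_seconds > self.slow_threshold:
            # Slow or failed response: back off, up to the configured maximum.
            self.current = min(self.maximum, self.current * self.factor)
        else:
            # Healthy response: recover gradually, never below the base delay.
            self.current = max(self.base, self.current / self.factor)
        return self.current


delay = AdaptiveDelay(base=1.0, maximum=8.0)
for seconds, ok in [(0.2, True), (3.0, True), (3.0, True), (0.1, False), (0.1, True)]:
    print(delay.update(seconds, ok))
```

In a scraper loop, time each request, pass the elapsed seconds and success flag to `update`, and sleep for the returned value before the next request.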

While scraping offers immense potential, it comes with its set of responsibilities.

Adhering to ethical considerations and best practices ensures that data extraction is not only efficient but also respectful, legal, and morally sound.

Scraping IPinfo.io with a GitHub Repository

GitHub is a web-based platform used for version control and collaboration, allowing multiple people to work on projects simultaneously. It is widely used by programmers to store, share, and version control their code.

GitHub hosts millions of repositories that offer solutions to a myriad of problems, including IPinfo.io scraping. Here is a general step-by-step guide using GitHub repositories as a reference:

Step 1: Research on GitHub

- Search GitHub for repositories related to scraping IPinfo.io.

- Look for repositories with good documentation, recent updates, and positive feedback from other users.

Step 2: Clone the Repository

- After identifying a suitable repository, clone it to your local machine using the git clone [repository URL] command.

Step 3: Set Up Your Environment

- Ensure you have the necessary software and libraries installed as specified in the repository's documentation.

- This might include tools like Python, BeautifulSoup, and the requests library.

Step 4: Review the Code

- Before running any script, always review the code to understand its functionality and ensure it doesn't contain any malicious elements.

- Check for any rate-limiting or delay mechanisms in the code to avoid overloading IPinfo.io's servers.

Step 5: Run the Scraper

- Execute the script as instructed in the repository's documentation.

- Monitor the script's progress and watch for any errors or issues.

Step 6: Store the Data

- Most scrapers will save the extracted data to a file or database.

- Ensure you have the necessary storage set up, whether it's a local CSV file, a database, or cloud storage.
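As one way to handle the storage step, here is a small sketch that writes lookup results to CSV with Python's standard `csv` module. The column list in `FIELDS` and the `write_records` name are assumptions; adjust them to whatever fields your scraper actually collects.

```python
import csv
import io
from typing import Iterable, Mapping, Sequence, TextIO

# Assumed column set; fields missing from a record are written as empty cells.
FIELDS = ("ip", "city", "region", "country", "org")


def write_records(
    records: Iterable[Mapping[str, str]],
    out: TextIO,
    fields: Sequence[str] = FIELDS,
) -> int:
    """Write records as CSV rows and return how many rows were written."""
    writer = csv.DictWriter(
        out,
        fieldnames=list(fields),
        extrasaction="ignore",  # drop keys that are not in `fields`
        restval="",             # fill missing keys with an empty string
    )
    writer.writeheader()
    count = 0
    for record in records:
        writer.writerow(record)
        count += 1
    return count


# Demonstration with an in-memory buffer instead of a real file.
buffer = io.StringIO()
rows = [
    {"ip": "8.8.8.8", "city": "Mountain View", "extra": "ignored"},
    {"ip": "1.1.1.1"},
]
written = write_records(rows, buffer)
print(written)
print(buffer.getvalue())
```

To write to disk, open the file with `open("ip_data.csv", "w", newline="")` and pass it as `out`; the `newline=""` argument is what the `csv` module documentation recommends to avoid blank lines on some platforms.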

Step 7: Analyze and Use the Data

- Analyze, visualize, or integrate the data into your applications.

- Always ensure that the data's usage complies with IPinfo.io's terms of service and any applicable laws.

Step 8: Stay Updated

- Regularly check the GitHub repository for updates or improvements to the scraping script.

- Websites often update their structures, which can break scrapers. Staying updated ensures your scraper remains functional.

Step 9: Consider Alternatives

- Instead of scraping, consider using IPinfo.io's official API, which provides a more efficient and ethical way to access the data.

- Many platforms offer APIs as a way to access their data without scraping, ensuring you get accurate and up-to-date information.
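A request to the official API can be sketched with the Python standard library alone. The URL pattern `https://ipinfo.io/<ip>/json`, the `token` query parameter, and the `IPINFO_TOKEN` environment variable name are assumptions based on how the API is commonly documented; confirm them against IPinfo.io's current API reference. `build_url` and `fetch` are hypothetical helper names.

```python
import ipaddress
import json
import urllib.parse
import urllib.request
from typing import Optional

API_BASE = "https://ipinfo.io"  # assumed base URL


def build_url(ip: str, token: Optional[str] = None) -> str:
    """Build the lookup URL, rejecting malformed addresses early."""
    ipaddress.ip_address(ip)  # raises ValueError if `ip` is not a valid address
    url = f"{API_BASE}/{ip}/json"
    if token:
        url += "?" + urllib.parse.urlencode({"token": token})
    return url


def fetch(ip: str, token: Optional[str] = None, timeout: float = 10.0) -> dict:
    """Perform one lookup over the network and return the decoded JSON."""
    request = urllib.request.Request(
        build_url(ip, token), headers={"Accept": "application/json"}
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.load(response)


# Only the URL is built here; calling fetch() would make a real request, e.g.
#   fetch("8.8.8.8", os.environ.get("IPINFO_TOKEN"))
print(build_url("8.8.8.8"))
```

Validating the address before building the URL avoids spending a request, and part of a rate limit, on input that could never succeed.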

People Also Ask

How does IPinfo.io detect scraping attempts?

Like many sophisticated online platforms, IPinfo.io employs the following mechanisms to detect and thwart unauthorized scraping attempts:

- Rate Limit Monitoring. If an IP address sends requests at a frequency that surpasses the platform's rate limits, it's a clear indication of a potential scraping attempt.

- User-Agent Analysis. By analyzing the User-Agent strings of incoming requests, IPinfo.io can identify patterns typical of scraping tools and bots, differentiating them from regular browsers.

- Behavioral Analysis. Automated scraping tools often exhibit behavior patterns that are distinct from human users, such as accessing multiple pages in quick succession or navigating the site in a predictable pattern. Such behaviors can trigger IPinfo.io's detection mechanisms.

- CAPTCHA Challenges. If suspicious activity is detected, IPinfo.io might present a CAPTCHA challenge to verify that the user is human and not an automated bot.


Are there any legal implications of scraping IPinfo.io?

Scraping IPinfo.io can carry legal implications. The data on IPinfo.io may be protected by copyright and by the platform's terms of service, and unauthorized extraction or use could be considered a breach.

In certain jurisdictions, unauthorized access to online platforms, including scraping, might be considered a violation of laws like the Computer Fraud and Abuse Act (CFAA).

Depending on the nature and use of the scraped data, this activity might intersect with data privacy regulations like the General Data Protection Regulation (GDPR) or the California Consumer Privacy Act (CCPA).

How can I use the data scraped from IPinfo.io ethically?

Using data ethically is paramount, especially in today's data-driven world. Here are some guidelines to ensure ethical use of data scraped from IPinfo.io:

- Permission First. Before scraping or using the data, ensure you have the necessary permissions. This might involve reaching out to IPinfo.io directly or adhering to their API usage guidelines.

- Transparency. If you're using the scraped data for your users or customers, be transparent about the source of the data and how it was obtained.

- Data Minimization. Only scrape and store data that is absolutely necessary for your purpose. Avoid hoarding data without a clear use case.

- Respect Privacy. Ensure that any data you scrape, especially if it pertains to individuals, respects privacy norms. Anonymize or aggregate data where possible to protect individual identities.
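Data minimization and anonymization can be applied before anything is stored. The sketch below keeps only a short list of fields, replaces each address with its network prefix, and aggregates by country. The prefix lengths (/24 for IPv4, /48 for IPv6), the `KEEP_FIELDS` list, and the function names are illustrative assumptions; whether truncation is sufficient anonymization depends on your legal context.

```python
import ipaddress
from collections import Counter
from typing import Dict, Iterable, Mapping, Sequence

# Assumed minimal field set for a location-level analysis.
KEEP_FIELDS = ("country", "region")


def truncate_ip(ip: str) -> str:
    """Zero the host part of an address so it no longer identifies one device."""
    address = ipaddress.ip_address(ip)
    prefix = 24 if address.version == 4 else 48
    network = ipaddress.ip_network(f"{address}/{prefix}", strict=False)
    return str(network.network_address)


def minimize(record: Mapping[str, str], keep: Sequence[str] = KEEP_FIELDS) -> Dict[str, str]:
    """Keep only the needed fields plus a truncated address."""
    slim = {key: record[key] for key in keep if key in record}
    slim["ip_prefix"] = truncate_ip(record["ip"])
    return slim


def count_by_country(records: Iterable[Mapping[str, str]]) -> Counter:
    """Aggregate records into per-country counts."""
    return Counter(r["country"] for r in records if "country" in r)


raw = [
    {"ip": "203.0.113.77", "country": "US", "region": "California", "org": "Example"},
    {"ip": "2001:db8:abcd:12::1", "country": "DE", "region": "Berlin"},
    {"ip": "203.0.113.9", "country": "US", "region": "Texas"},
]
slim_records = [minimize(r) for r in raw]
print(slim_records)
print(count_by_country(slim_records))
```

If per-country counts are all an analysis needs, storing only the output of `count_by_country` avoids retaining address-level records at all.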

Wrapping Up

Understanding and leveraging IP data is a necessity in today's data-driven world. IPinfo.io is a great tool for those seeking accurate, comprehensive, and actionable IP insights.

As we navigate the complexities of data extraction, it's imperative to approach the practice with ethics, responsibility, and respect.

Whether you're a business, a cybersecurity expert, or a curious individual, don't just be a passive observer.

Dive into IPinfo.io's vast offerings, harness the power of its tools, and be part of the revolution shaping our data-driven future.