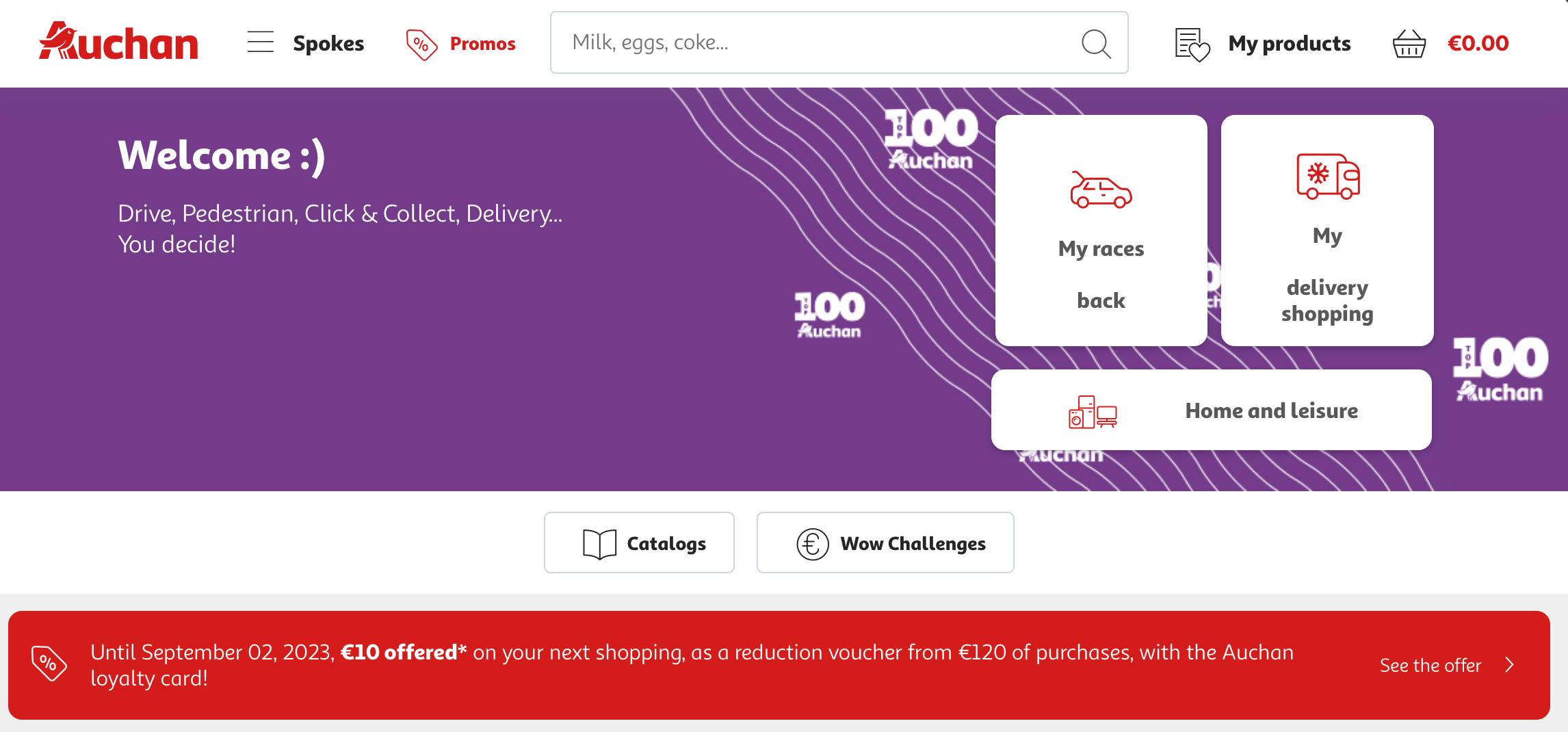

Auchan is a French multinational retail corporation headquartered in Croix, France.

One of the world's principal distribution groups with a presence in 15 countries and 337,800 employees, Auchan operates a chain of hypermarkets, supermarkets, and convenience stores covering various sectors, including food, electronics, home goods, clothing, and more.

Founded in 1961 by Gérard Mulliez, the company has expanded internationally and now has stores in various parts of Europe, Asia, and Africa.

Auchan aims to improve the daily life of its customers by offering a wide range of products and services at affordable prices.

The company also has an online presence through its e-commerce platform, Auchan.fr, which offers a similar range of products as its physical stores and additional services like home delivery and click-and-collect options.

Why Scrape Auchan.fr?

E-commerce data is more than just numbers; it's the backbone of every e-commerce business strategy. Therefore, the importance of scraping online stores such as Auchan.fr for data cannot be overstated.

Scraping data from Auchan.fr gives you access to a wealth of information that can help you make informed decisions, such as product listings, customer reviews, and pricing strategies.

With this information at your fingertips, you can better understand market trends, customer preferences, and even your competitors' strategies so you can come up with valuable insights.

Understanding these trends allows you to tailor your products, services, and marketing efforts to meet the specific needs and wants of your target audience.

Competitor Price Monitoring

Price is often the most significant factor that influences a customer's purchase decision.

Therefore, keeping an eye on your competitors' pricing trends and strategies is crucial for staying competitive in the market. This is where scraping comes into play.

By scraping Auchan.fr, you can gather up-to-date pricing data for products that match or compete with your own.

You can analyze Auchan pricing data to identify pricing patterns, seasonal fluctuations, and even promotional strategies.

You can then adjust your own pricing strategies to offer better deals to your customers, thereby gaining a competitive edge.

You can also automate this process to get real-time updates, allowing you to react quickly to any changes in the market.

Inventory Management

Managing inventory is a challenging task that requires a lot of precision and planning.

One small mistake can lead to overstocking or understocking, both of which are detrimental to your business.

Scraping Auchan.fr can provide you with valuable data that can significantly improve your inventory management processes.

By scraping product availability and sales data from Auchan.fr, you can gain insights into which products are in high demand and which are not.

By analyzing this data, you can make data-driven decisions to optimize your inventory.

For instance, if a specific product is frequently out of stock on the site, it's likely a high-demand item. This suggests that you should consider increasing your stock levels for that particular product.

On the other hand, if a product has been sitting in the inventory for too long, you might consider running promotions to move that stock.

By leveraging the power of web scraping, you can optimize your inventory to ensure that you always have the right products, in the right quantities, at the right time.

This not only improves customer satisfaction but also reduces the costs associated with holding inventory.

Scraping Auchan.fr provides you with actionable data that can significantly improve various aspects of your eCommerce business, from pricing and inventory management to market research and competitive analysis.

Other Applications of Auchan.fr Data

Data scraped from Auchan.fr can be utilized in a multitude of ways beyond just competitor price monitoring and inventory management. Here are some additional applications:

- Insights into Pricing Trends. By analyzing the historical product prices from Auchan.fr, you can gain valuable insights into pricing trends.

This can help you understand seasonal fluctuations, promotional periods, and even predict future pricing strategies.

- Auchan Drive Analysis. Auchan Drive is a popular service that allows customers to shop online and pick up groceries from designated locations.

By scraping data related to this service, you can understand its popularity, peak usage times, and what products are most commonly purchased, helping you to optimize your own similar services.

- Discounted Price Strategies. Understanding the frequency and types of discounts offered on Auchan.fr can help you formulate your own discounted price strategies.

This is particularly useful for e-commerce stores looking to compete effectively.

- Single Product Focus. If you're an e-commerce store specializing in a single product or a narrow range of products, scraping Auchan.fr can provide you with data-driven insights on how your product is positioned in the market, customer sentiment, and what advanced options or features customers are seeking.

- Variegated Offering Analysis. Auchan.fr has a wide range of products, from grocery items to electronics.

By scraping this variegated offering, you can identify gaps in your own product range and adjust accordingly to meet market demands.

- Customer Sentiment. Scraping customer reviews and ratings can provide you with a wealth of information on customer sentiment.

Sentiment analysis tools can then be used to quantify this data, which can be invaluable for content creation and marketing strategies.

- Content Generation. The product descriptions, images, and customer reviews on Auchan.fr can serve as a rich source for content generation.

Whether it's for your blog, social media, or promotional materials, this data can save you precious time in the content creation process.

- Grocery Stores vs E-commerce. By comparing the types of products that are popular in Auchan's physical grocery stores versus their e-commerce platform, you can gain insights into consumer behavior.

This can inform your own multi-channel retail strategy.

By leveraging these diverse applications of Auchan.fr data, you can gain a comprehensive understanding of the market, enabling you to make informed and strategic business decisions.

Tools You'll Need

Web Scraping Libraries

Choosing the right web scraping libraries is crucial for a smooth and efficient process.

Python is often the go-to language for web scraping due to its ease of use and extensive libraries designed for this purpose.

Some of the most popular Python libraries for scraping Auchan.fr include:

- Beautiful Soup. This library is excellent for parsing HTML and XML documents. It creates a parse tree that can be used to extract data easily.

- Scrapy. This is an open-source framework that provides all the tools you need to crawl websites and extract the data. It's highly customizable and can handle a wide range of scraping tasks.

- Selenium. While not strictly a web scraping library, Selenium is often used when the website has JavaScript elements that need interaction for the data to be displayed.

- Requests. This library is used for making HTTP requests and can be used in conjunction with Beautiful Soup to scrape static web pages.

- lxml. This is another library that can parse HTML and XML documents and is usually faster than Beautiful Soup.

By using these Python libraries for scraping Auchan.fr, you can ensure that you have the flexibility and power to extract the data you need.

Geonode Proxies

Web scraping often involves making multiple requests to a website, which can lead to your IP address getting blocked.

This is where premium proxies come into play. Geonode proxies can make your scraping activities more efficient and secure in the following ways:

- IP Rotation. Geonode proxies allow you to rotate IP addresses, making it difficult for websites to block you.

- Speed. Geonode offers high-speed proxies that can make your scraping process faster.

- Anonymity. Using Geonode proxies ensures that your original IP address is hidden, providing an extra layer of security.

- Geographical Targeting. Geonode allows you to use proxies from specific countries, which can be useful if the website has geo-restrictions.

- Rate Limiting. By using multiple proxies, you can bypass rate limits set by websites, allowing you to scrape data more efficiently.

Essential Browser Extensions

While libraries and proxies form the backbone of your scraping operation, browser extensions can also be incredibly useful tools. Some Chrome extensions for web scraping that can enhance your scraping experience include:

- Web Scraper. This extension allows you to plan out your scraping tasks and execute them directly from your browser.

- Data Miner. This is another user-friendly extension that can scrape data from web pages and export it into various formats.

- Scraper. This is a simple yet effective extension for scraping data from websites and exporting it to Google Sheets.

- Instant Data Scraper. This extension can automatically scrape tabular data from web pages and export it into a CSV file.

The right tools can make or break your web scraping project. By choosing the appropriate Python libraries, using Geonode proxies for added efficiency and security, and leveraging useful browser extensions, you can ensure a successful and hassle-free scraping experience.

Setting Up Your Environment

Installing Required Software

Before you can start scraping Auchan.fr, you need to have the right software in place. The software setup for scraping Auchan.fr generally involves installing a programming language, libraries, and possibly a development environment. Here's how to go about it:

Python Installation

- Download Python. Visit the official Python website and download the latest version suitable for your operating system.

- Install Python. Run the installer and follow the on-screen instructions. Make sure to check the box that says "Add Python to PATH" during installation.

Library Installation

After installing Python, you'll need to install the libraries that you'll use for web scraping. Open your command prompt or terminal and run the following commands:

- For Beautiful Soup:

pip install beautifulsoup4

- For Scrapy:

pip install scrapy

- For Selenium:

pip install selenium

- For Requests:

pip install requests

- For lxml:

pip install lxml

IDE (Optional)

While not strictly necessary, using an Integrated Development Environment (IDE) like PyCharm or Visual Studio Code can make your coding experience much more comfortable.

By completing this software setup for scraping Auchan.fr, you'll have all the essential tools you need to start your web scraping project.
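After running the install commands, it can help to confirm that every library is importable. The sketch below is one way to do that with the standard library only. The mapping of pip names to import names is the one assumption to keep in mind: beautifulsoup4 is imported as `bs4`, while the others share their pip name.

```python
import importlib.util

# pip package name -> module name used in an import statement.
REQUIRED = {
    "beautifulsoup4": "bs4",
    "scrapy": "scrapy",
    "selenium": "selenium",
    "requests": "requests",
    "lxml": "lxml",
}


def missing_packages(required=REQUIRED):
    """Return the pip names of packages whose module cannot be found."""
    return [
        pip_name
        for pip_name, module in required.items()
        if importlib.util.find_spec(module) is None
    ]


if __name__ == "__main__":
    missing = missing_packages()
    if missing:
        print("Missing:", ", ".join(missing))
    else:
        print("All scraping libraries are installed.")
```

Any name the script reports as missing can be installed with the matching `pip install` command listed above.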

Configuring Geonode Proxies

Using proxies is crucial for a successful web scraping project, as they help you bypass rate limits and avoid IP bans. Geonode proxies are a reliable choice for this purpose. Here's a step-by-step guide on setting up Geonode proxies for web scraping:

- Sign Up for Geonode. Visit the Geonode website and sign up for an account. Choose a plan that suits your needs.

- Access Dashboard. Once your account is set up, log in to your Geonode dashboard.

- Configure IP Rotation. Enable IP rotation in the settings. This will automatically rotate your IP address at intervals, making it harder for websites to block you.

- Choose Geolocation. If you need to scrape data from a specific geographical location, select the appropriate country from the list.

- Generate Proxy List. After configuring your settings, generate a list of proxy IPs and ports.

- Integrate with Your Code. Finally, integrate the Geonode proxies into your scraping code. For Python, this often involves using the requests library and supplying the proxy details in the proxies parameter.

By following these steps, you'll have Geonode proxies configured and ready to make your web scraping project more efficient and secure. With the right software and proxies in place, you're now fully equipped to start scraping Auchan.fr.
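The last step, passing proxy details to the requests library, could look roughly like the sketch below. The host, port, username, and password are placeholders, not real Geonode values; use the details shown in your own dashboard. The helper names `build_proxies` and `fetch` are hypothetical and exist only for this illustration.

```python
from urllib.parse import quote

import requests


def build_proxies(host, port, username, password):
    """Build the mapping that requests accepts in its `proxies` parameter."""
    # Percent-encode credentials so characters like "@" do not break the URL.
    user = quote(username, safe="")
    secret = quote(password, safe="")
    proxy_url = f"http://{user}:{secret}@{host}:{port}"
    # The same proxy endpoint handles both HTTP and HTTPS traffic here.
    return {"http": proxy_url, "https": proxy_url}


def fetch(url, proxies, timeout=15):
    """Fetch a page through the proxy and return its HTML text."""
    response = requests.get(url, proxies=proxies, timeout=timeout)
    response.raise_for_status()
    return response.text


# Placeholder values only (assumption): replace with your own proxy details.
proxies = build_proxies("proxy.example.com", 8000, "USERNAME", "PASSWORD")
```

Calling `fetch("https://www.auchan.fr/", proxies)` would then route the request through the configured proxy.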

The Scraping Process

Identifying Data Points

Before diving into the code, it's crucial to identify what data you want to scrape from Auchan.fr. Knowing what data points you're interested in will guide the rest of the scraping process.

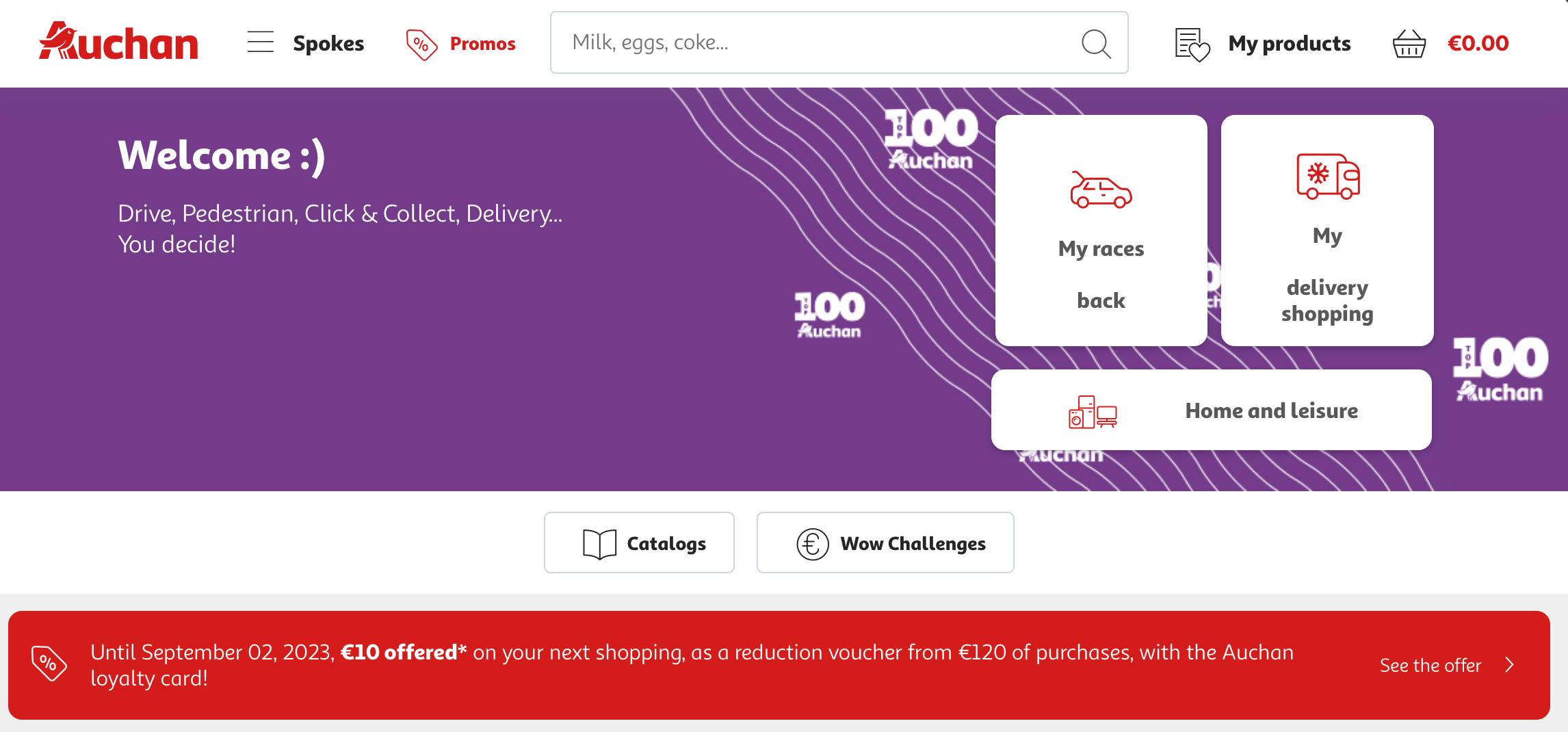

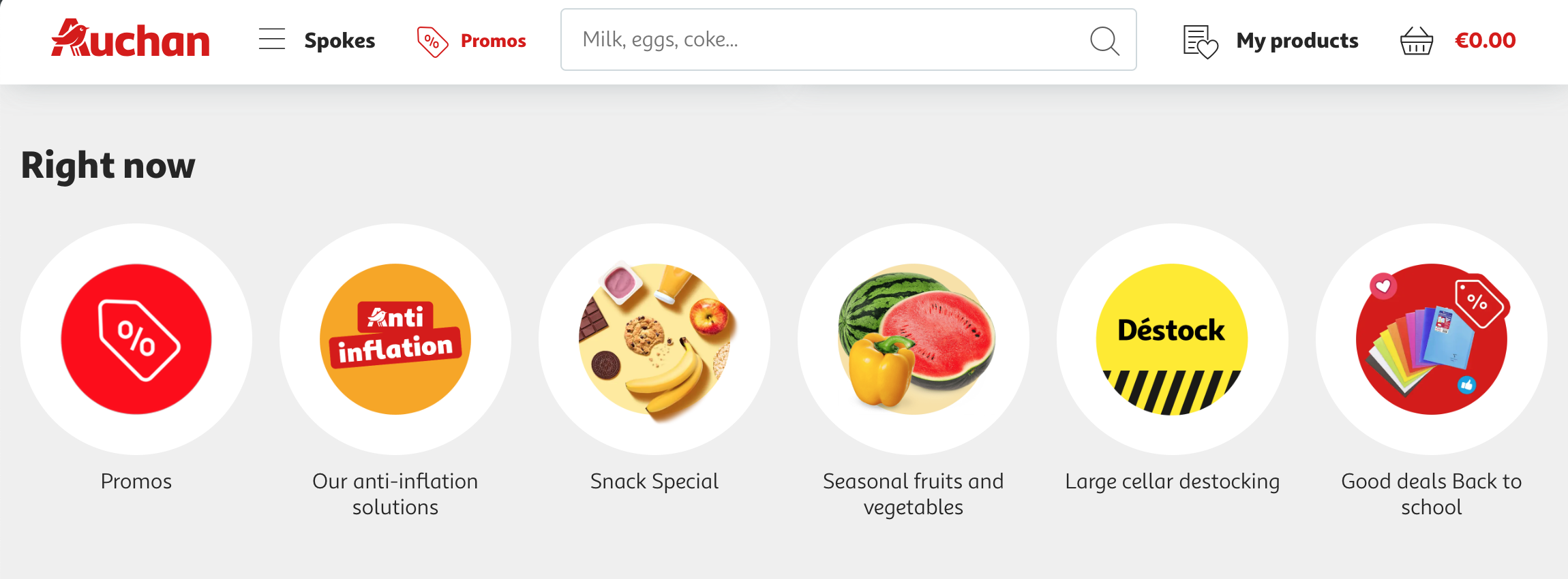

The Auchan.fr website is a comprehensive e-commerce platform that offers a wide range of products, from groceries to electronics, home appliances, and more.

The website is organized into various sections, including promotions, specific product categories, and seasonal offers.

It also provides options for different types of delivery methods such as Drive, Piéton, Click & Collect, and home delivery.

Types of Data That Can Be Scraped

- Product Information. Details about products, including their product names, descriptions, and prices, can be scraped. This is valuable for competitor price monitoring and inventory management.

- Promotional Offers. Information about ongoing promotions, discounts, and special offers can be gathered. This can provide insights into pricing trends and help businesses understand how to offer competitive prices.

- Customer Reviews. Reviews and ratings given by customers for specific products can be scraped for sentiment analysis. This data can offer insights into customer sentiment and preferences.

- Catalog Information. The website features various catalogs that can be scraped to understand the variegated offering of products in different seasons or for special events.

- Delivery Options. Information about the different delivery methods like "Drive, Piéton, Click & Collect" can be scraped. This is particularly useful for businesses looking to understand the logistics and delivery methods of competitors.

- Stock Availability. Data about the availability of products can be scraped. This is crucial for inventory management and to understand market demands.

By determining what data to scrape from Auchan.fr, you can tailor your scraping code to target these specific elements, making the process more efficient.
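One way to pin down the chosen data points before writing any scraping logic is to describe them as a record type. The sketch below is illustrative: the class name `ProductRecord` and its field names are assumptions made for this example, not anything defined by Auchan.fr.

```python
from dataclasses import asdict, dataclass
from typing import Optional, Tuple


@dataclass
class ProductRecord:
    """One scraped product; fields mirror the data points listed above."""

    name: str
    price_eur: Optional[float] = None      # product information
    description: str = ""
    promotion: str = ""                    # promotional offer text, if any
    rating: Optional[float] = None         # customer reviews
    review_count: int = 0
    in_stock: Optional[bool] = None        # stock availability
    delivery_options: Tuple[str, ...] = () # e.g. ("Drive", "Click & Collect")


record = ProductRecord(name="Example product", price_eur=2.49, in_stock=True)
row = asdict(record)  # plain dict, convenient for CSV or database storage
```

Fields default to empty values so a record can still be created when a page does not expose every data point.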

Writing the Scraping Code

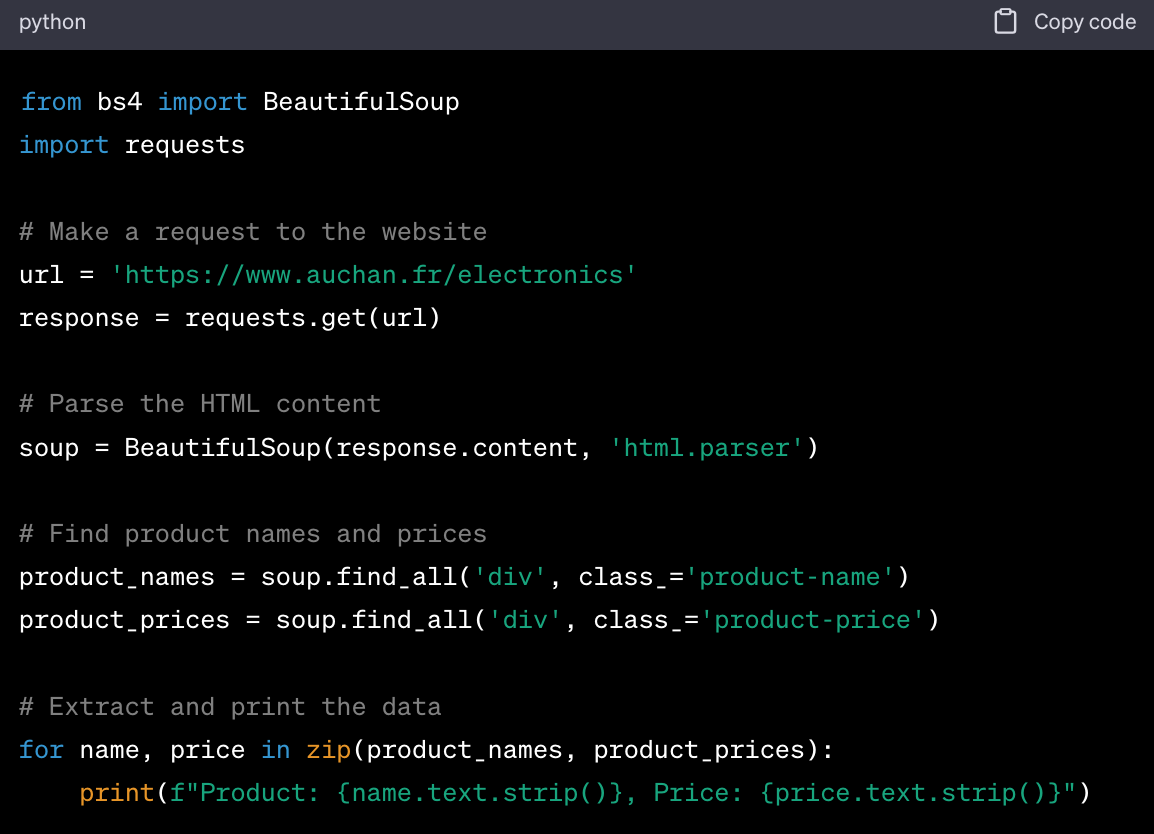

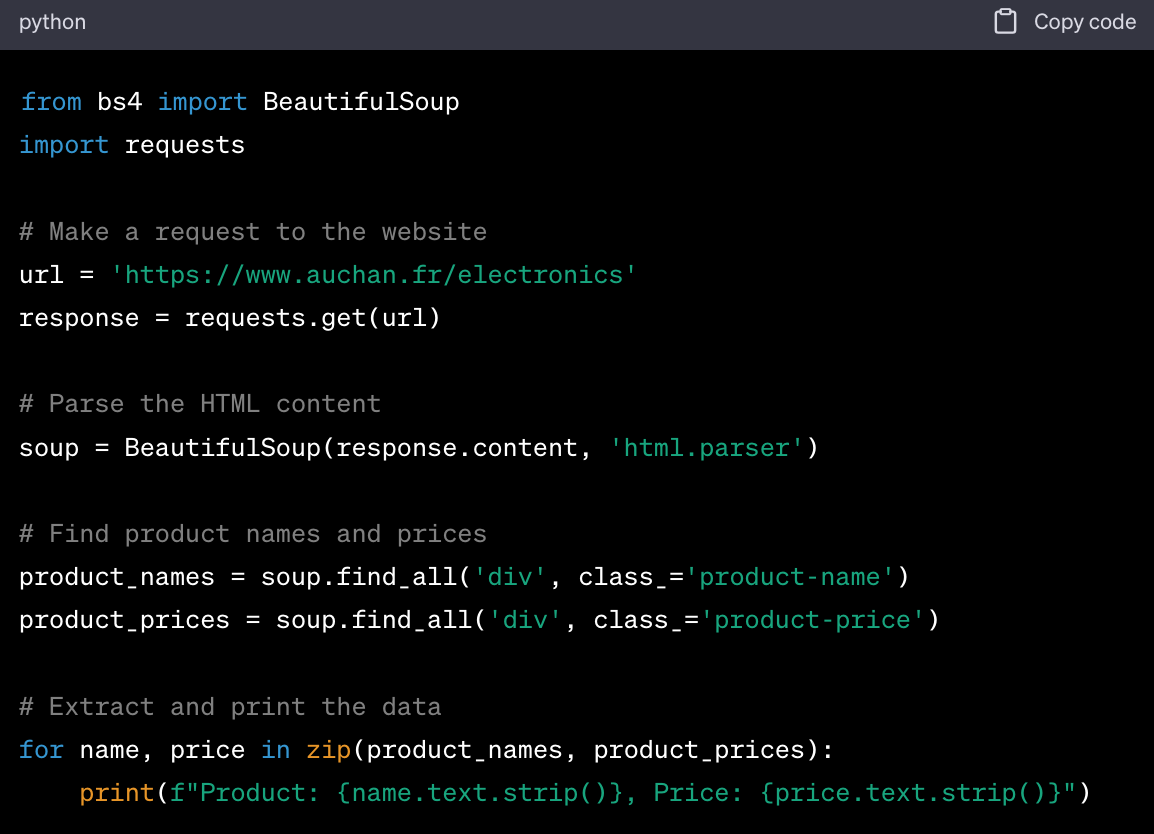

Once you've identified your target data points, the next step is to write the code that will extract this data. Python is commonly used for web scraping, and libraries like Beautiful Soup and Scrapy are particularly useful for this purpose.

Here's a simple Python code example for scraping product names and prices using Beautiful Soup:

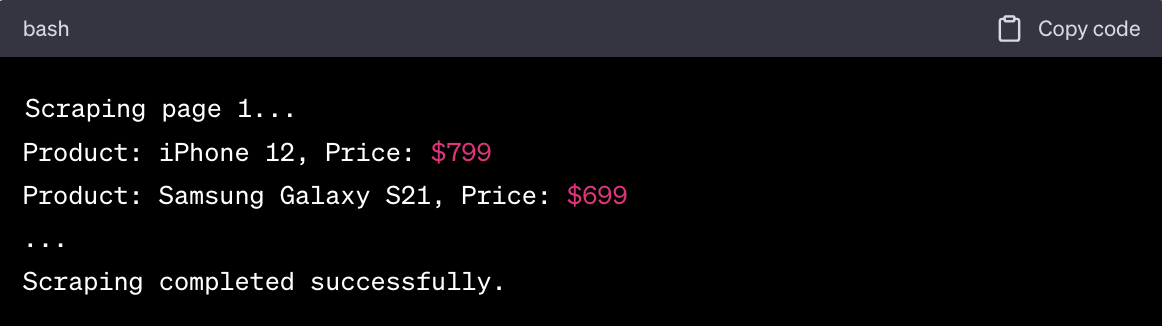
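For readers who prefer copyable text, a comparable sketch is shown here. It is not a transcription of the image, and the CSS classes (`product-card`, `product-name`, `product-price`) are hypothetical; inspect the live pages in your browser's developer tools to find the real selectors. The sample HTML stands in for a downloaded page so the parsing logic can be tried without a network request.

```python
from bs4 import BeautifulSoup

# Stand-in markup (assumption): real Auchan.fr pages use different markup.
SAMPLE_HTML = """
<div class="product-card">
  <p class="product-name">Lait demi-écrémé 1L</p>
  <span class="product-price">1,09 €</span>
</div>
<div class="product-card">
  <p class="product-name">Café moulu 250g</p>
  <span class="product-price">3,49 €</span>
</div>
"""


def parse_price(text):
    """Convert a French-formatted price such as '1,09 €' to a float."""
    cleaned = text.replace("€", "").replace("\xa0", "").replace(" ", "")
    return float(cleaned.strip().replace(",", "."))


def parse_products(html):
    """Extract name and price from every product card in the HTML."""
    soup = BeautifulSoup(html, "html.parser")
    products = []
    for card in soup.select("div.product-card"):
        name = card.select_one(".product-name")
        price = card.select_one(".product-price")
        if name is None or price is None:
            continue  # skip cards that lack either field
        products.append(
            {
                "name": name.get_text(strip=True),
                "price_eur": parse_price(price.get_text()),
            }
        )
    return products


products = parse_products(SAMPLE_HTML)
```

In a real run, the HTML string would come from an HTTP response (for example `requests.get(url).text`) instead of the sample constant.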

Running the Scraper

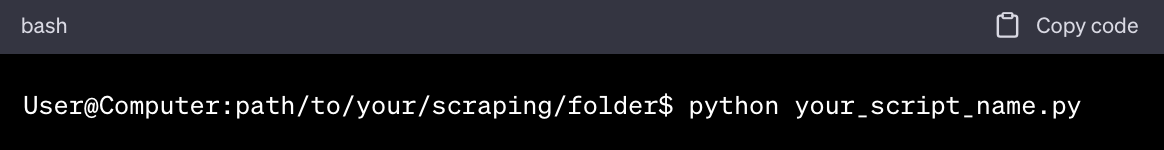

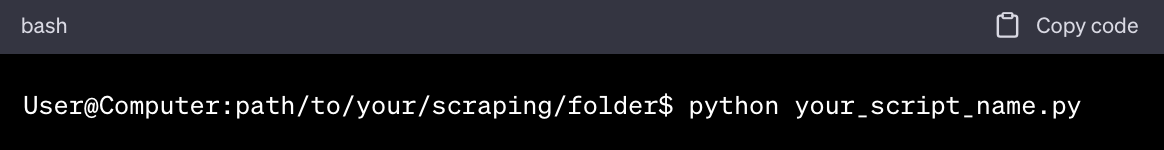

After writing your scraping code, the final step is to run the scraper to collect the data. Here's how to execute your scraping code and collect data:

- Open Terminal or Command Prompt. Navigate to the folder where your scraping code is saved.

- Run the Code.

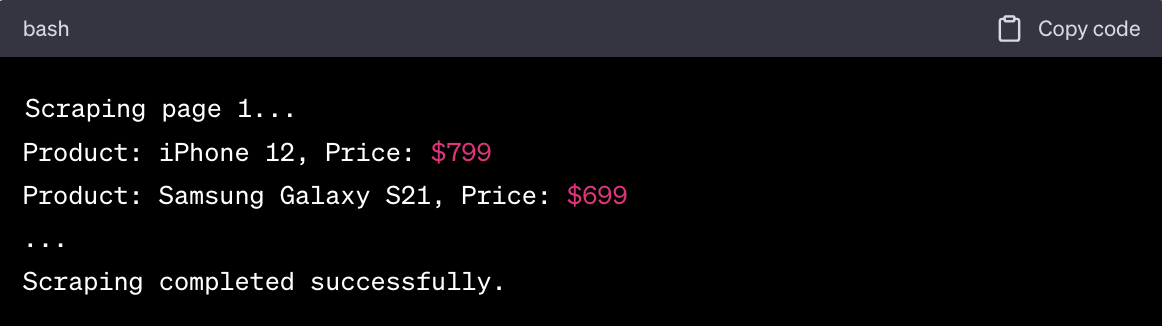

- Monitor the Output: Watch the terminal to ensure that the scraper is running as expected. Any errors or issues will be displayed here.

- Collect and Store Data: Your scraper will collect the data and, depending on your code, may save it to a file such as a CSV or a database.

By following these steps, you'll be able to execute your scraping code and collect valuable data from Auchan.fr. Make sure to always respect the website's terms of service and robots.txt file to ensure that your scraping activities are ethical and legal.
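The storage step can be sketched with Python's built-in csv module. The column names `name` and `price_eur` are assumptions chosen for this example, and the in-memory buffer stands in for a real file so the output is easy to inspect.

```python
import csv
import io

FIELDNAMES = ["name", "price_eur"]


def write_products_csv(products, file_obj):
    """Write a list of product dicts to an open text file as CSV."""
    writer = csv.DictWriter(file_obj, fieldnames=FIELDNAMES)
    writer.writeheader()
    writer.writerows(products)


# Example rows (made up for illustration).
rows = [
    {"name": "Lait", "price_eur": 1.09},
    {"name": "Café", "price_eur": 3.49},
]

buffer = io.StringIO()
write_products_csv(rows, buffer)
csv_lines = buffer.getvalue().splitlines()
```

To write to disk instead, open the target with `open("products.csv", "w", newline="", encoding="utf-8")` and pass that file object to the same function; the file name is up to you.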

Best Practices and Legal Considerations

Respecting Robots.txt

Before you start scraping any website, it's crucial to check and respect its robots.txt file. This file contains guidelines on what you can and cannot scrape. For Auchan.fr, the file is served from the site root, at https://www.auchan.fr/robots.txt.

The Auchan.fr robots.txt guidelines specify which user-agents are allowed or disallowed and which paths are off-limits for scraping. Always adhere to these rules to ensure that your scraping activities are ethical and in compliance with the website's policies.
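Checking a path against robots.txt rules can be automated with `urllib.robotparser` from the standard library. The rules in this sketch are made up for illustration and are not the real Auchan.fr rules; the user-agent name `my-scraper` is also a placeholder.

```python
from urllib.robotparser import RobotFileParser

# Illustrative rules only (assumption), NOT the actual Auchan.fr robots.txt.
EXAMPLE_RULES = """
User-agent: *
Disallow: /checkout/
""".splitlines()


def build_parser(robots_lines):
    """Create a parser from robots.txt content that is already in memory."""
    parser = RobotFileParser()
    parser.parse(robots_lines)
    return parser


parser = build_parser(EXAMPLE_RULES)
blocked = parser.can_fetch("my-scraper", "https://www.example.com/checkout/cart")
allowed = parser.can_fetch("my-scraper", "https://www.example.com/products/1")
```

Against a live site, the usual pattern is `parser.set_url("https://www.auchan.fr/robots.txt")` followed by `parser.read()`, after which `can_fetch` is called before each request.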

Rate Limiting and IP Rotation

Web scraping often involves making multiple requests to a website in a short period, which can trigger rate limiting or even result in your IP being banned. This is where Geonode proxies come in handy.

- IP Rotation. Geonode proxies allow you to rotate your IP addresses, making it difficult for websites to block or rate-limit you.

- Speed and Efficiency. Geonode offers high-speed proxies that can make your scraping process faster and more efficient.

- Geographical Targeting. If you need to scrape data from a specific geographical location, Geonode allows you to use proxies from that particular country.

By using Geonode proxies, you can bypass rate limits and scrape data more efficiently, ensuring that your web scraping activities are both effective and ethical.
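Client-side rotation paired with a polite delay between requests can be sketched with the standard library alone. The class name `ProxyRotator` and the proxy URLs are hypothetical; a provider may also rotate IPs for you on its side, in which case only the delay part is relevant.

```python
import itertools
import time


class ProxyRotator:
    """Cycle through proxy URLs and enforce a minimum gap between requests."""

    def __init__(self, proxy_urls, min_delay=1.0):
        if not proxy_urls:
            raise ValueError("at least one proxy URL is required")
        self._cycle = itertools.cycle(proxy_urls)
        self._min_delay = min_delay
        self._last_request = None

    def next_proxies(self):
        """Return the next proxy as a mapping usable by requests."""
        url = next(self._cycle)
        return {"http": url, "https": url}

    def wait(self):
        """Sleep just long enough to keep min_delay seconds between calls."""
        if self._last_request is not None:
            remaining = self._min_delay - (time.monotonic() - self._last_request)
            if remaining > 0:
                time.sleep(remaining)
        self._last_request = time.monotonic()


# Placeholder proxy addresses (assumption).
rotator = ProxyRotator(["http://proxy-a.example:8000", "http://proxy-b.example:8000"])
```

Before each request, call `rotator.wait()` and pass `rotator.next_proxies()` as the `proxies` argument of your HTTP call.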

Data Storage and Usage

Once you've scraped the data, the next consideration is how to store and use it. The ethical use of scraped data is paramount, as misuse can lead to legal repercussions.

- Secure Storage: Always store the scraped data in a secure environment, such as an encrypted database.

- Data Minimization: Only collect and store data that is essential for your purpose. Excessive data collection can lead to ethical and legal issues.

- User Consent: If the data involves personal information, make sure you have the necessary consents before using it.

- Compliance: Ensure that your data storage and usage comply with data protection laws like GDPR if you're dealing with data from European citizens.

By adhering to these best practices and legal considerations, you can ensure that your web scraping activities are both effective and ethical. This not only protects you legally but also builds trust with your users and stakeholders.

Troubleshooting and FAQs

Common Errors and Solutions

When scraping Auchan.fr, you may encounter several issues that can disrupt your data collection process.

Troubleshooting Auchan.fr scraping issues is essential for a smooth and efficient scraping experience. Here are some common errors and their solutions:

Error 1: 403 Forbidden

Cause: This usually means that the website has detected your scraping activity and has blocked your IP address.

Solution: Use Geonode proxies to rotate your IP address and bypass the block.

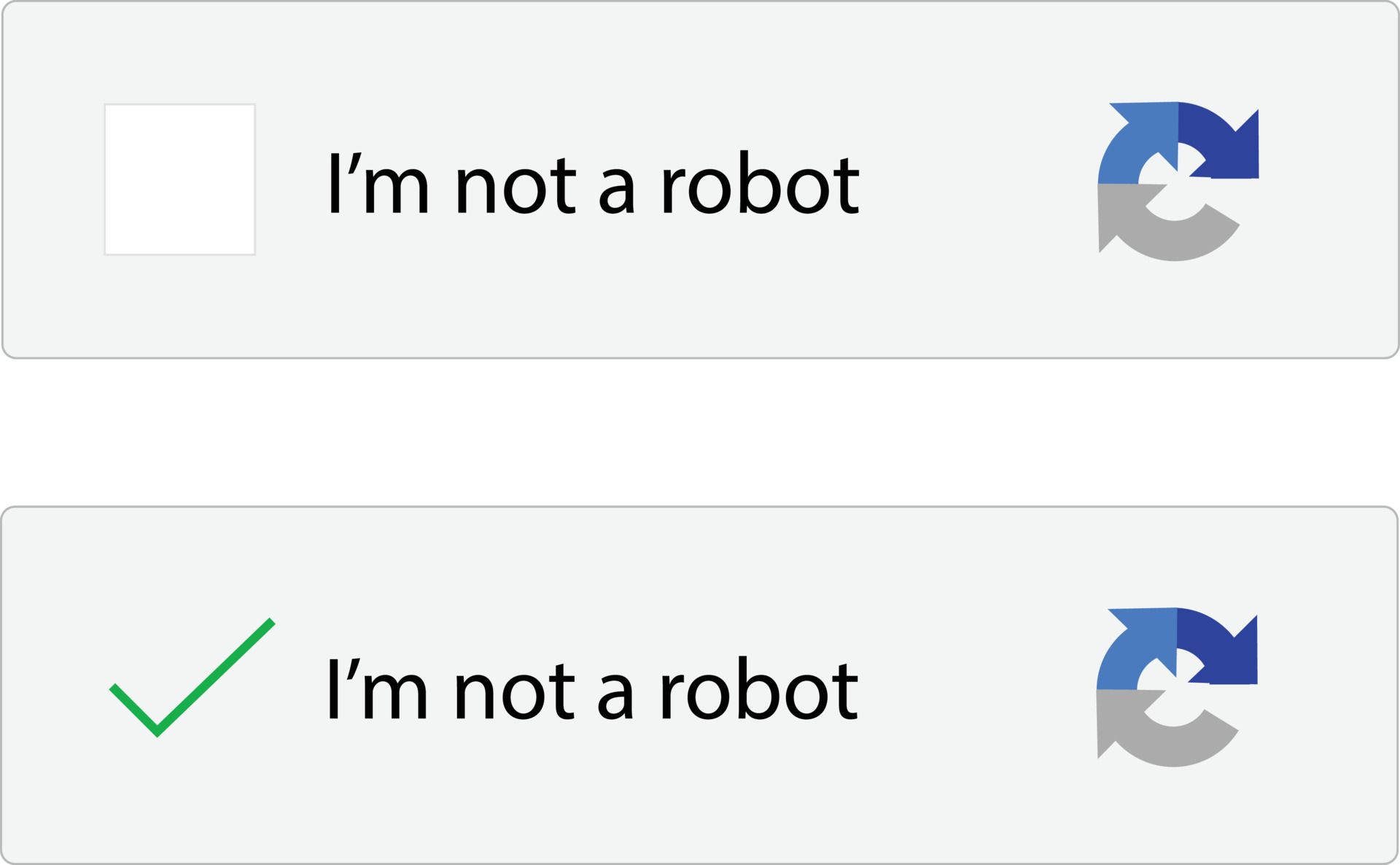

Error 2: CAPTCHA Challenge

Cause: The website has implemented CAPTCHA to prevent automated scraping.

Solution: You can either solve the CAPTCHA manually or use a CAPTCHA-solving service. Some advanced scraping libraries also offer automatic CAPTCHA solving.

Error 3: Incomplete Data

Cause: The website may use AJAX to load content, and your scraper might not be capturing this dynamic content.

Solution: Use a library like Selenium that can interact with JavaScript elements to ensure you capture all the data.

Error 4: Rate Limiting

Cause: Making too many requests in a short period.

Solution: Implement rate limiting in your code to control the frequency of your requests. Using Geonode proxies can also help in this regard.
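A common way to handle blocked or throttled responses in code is to retry with an increasing delay. The sketch below assumes a hypothetical `fetch` callable that returns a `(status_code, body)` pair; in practice it would wrap your HTTP client. The set of retryable status codes is also a choice made for this example.

```python
import time

# Status codes treated as "slow down and try again" (assumption).
RETRYABLE_STATUSES = {403, 429, 503}


def fetch_with_backoff(fetch, url, max_attempts=4, base_delay=1.0, sleep=time.sleep):
    """Call fetch(url), doubling the wait after each retryable response."""
    status, body = None, None
    for attempt in range(max_attempts):
        status, body = fetch(url)
        if status not in RETRYABLE_STATUSES:
            return status, body
        if attempt < max_attempts - 1:
            # Waits of base_delay, 2x, 4x, ... between attempts.
            sleep(base_delay * 2 ** attempt)
    # All attempts were throttled; return the last response as-is.
    return status, body
```

The `sleep` parameter is injectable so the function can be exercised without real waiting; leave it at its default in normal use.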

People Also Ask

How to scrape Auchan.fr without getting blocked?

To scrape Auchan.fr without getting blocked, it's advisable to use proxies for IP rotation, respect the website's robots.txt guidelines, and implement rate limiting in your code. Geonode proxies can be particularly useful for avoiding blocks.

Is scraping Auchan.fr legal?

Scraping Auchan.fr is generally considered legal as long as you adhere to the website's terms of service and robots.txt guidelines. However, scraping personal data without consent can lead to legal repercussions.

How can I scrape Auchan.fr faster?

To scrape Auchan.fr more quickly, you can:

- Use high-speed Geonode proxies for faster data retrieval.

- Optimize your code to reduce the time taken for each request.

- Use multi-threading to make multiple requests simultaneously.
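The multi-threading suggestion can be sketched with `concurrent.futures` from the standard library. The `fetch` argument is a hypothetical callable that downloads one URL and returns its content; the worker count of 4 is an arbitrary starting point and should stay low enough to respect the site's limits.

```python
from concurrent.futures import ThreadPoolExecutor


def fetch_all(urls, fetch, max_workers=4):
    """Run fetch(url) for every URL in a thread pool; return {url: result}."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # pool.map yields results in the same order as the input URLs.
        return dict(zip(urls, pool.map(fetch, urls)))
```

For example, `fetch_all(product_urls, lambda u: requests.get(u, timeout=15).text)` would download several pages concurrently, assuming `product_urls` is your own list of page addresses.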

By addressing these common questions and troubleshooting issues, you can make your Auchan.fr scraping project more efficient and effective. Always remember to scrape responsibly and ethically.

Wrapping Up

Scraping Auchan.fr offers a plethora of opportunities for businesses, data analysts, and marketers alike.

Whether it's for competitive pricing analysis, inventory management, or market research, the data you collect can serve as a goldmine of insights.

By adhering to ethical guidelines and using the right tools and techniques, you can scrape Auchan.fr efficiently and effectively.

The importance and benefits of scraping Auchan.fr cannot be overstated, as it allows you to make data-driven decisions that can significantly impact your business strategy.

Next Steps

Once you've successfully scraped data from Auchan.fr, the journey doesn't end there. Here's what you can do next to leverage this data for business success:

- Data Analysis. Use data analytics tools to process and analyze the scraped data. Look for patterns, trends, and insights that can inform your business decisions.

- Competitive Pricing. If you've scraped pricing data, use it to adjust your own pricing strategies to stay competitive in the market.

- Inventory Planning. Use product availability data to optimize your own inventory levels. Stock up on items that are frequently out of stock on Auchan.fr, as these are likely to be in high demand.

- Customer Engagement. Use scraped customer reviews and feedback to improve your products and services. This can also help you understand what customers are looking for, allowing you to tailor your offerings accordingly.

- Marketing Strategies. Use the data to identify gaps in the market or to fine-tune your marketing campaigns. For example, if a particular product is receiving rave reviews, consider running special promotions around it.

- Legal Compliance. Always ensure that the data you've collected is stored securely and is in compliance with data protection laws, especially if you're dealing with personal information.

By taking these next steps, you can turn the data you've scraped into actionable insights, driving your business towards greater success. Remember, the key to effective web scraping is not just collecting data, but also using it responsibly and strategically.
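As a small illustration of the inventory planning idea, the sketch below estimates how often each product was out of stock across repeated scrapes. The input format, a sequence of `(product_name, in_stock)` pairs, is an assumption for this example, and the sample observations are made up.

```python
from collections import defaultdict


def out_of_stock_rate(observations):
    """Return {product: share of scrapes in which it was out of stock}."""
    totals = defaultdict(int)
    misses = defaultdict(int)
    for name, in_stock in observations:
        totals[name] += 1
        if not in_stock:
            misses[name] += 1
    return {name: misses[name] / totals[name] for name in totals}


# Made-up observations from five scrape runs (assumption).
observations = [
    ("Product A", True),
    ("Product A", False),
    ("Product B", True),
    ("Product A", False),
    ("Product B", True),
]
rates = out_of_stock_rate(observations)
```

A product with a high rate is a candidate for closer review, though availability alone does not prove demand: supply problems produce the same signal.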
